Soon after the main PACE paper was published in 2011, there were numerous stories in the media about the dangerous militant ME patients who were harassing the researchers. It is known that the Science Media Centre was heavily involved in promoting this coverage. Many ME patients have suspected that these stories were part of a campaign to discredit critics of the trial. There is no question that it has affected how ME has been covered, part of what has been called the epistemic injustice (here and here) suffered by patients.

One of the main criticisms of the trial was that outcome thresholds were switched after the trial had finished. Some data were released after a First Tier Tribunal hearing. Reanalyses using the original thresholds found:

And:

This week, three of the researchers responded to the reanalyses. This week, also, Reuters carried a story saying that one of them, Michael Sharpe, had been driven out of researching the illness because the field is ‘too toxic’. The timing did not seem coincidental.

The article claims that Professor Sharpe is not alone and that ‘there has been a decline in the number of new CFS/ME treatment trials being launched’. It quotes evidence from clinicaltrials.gov:

From 2010 to 2014, 33 such trials started. From 2015 until the present, the figure dropped to around 20.

I was surprised by that figure, as research a couple of years ago found an increase in the number of papers published on the illness. In line with the trend in medical science generally, more work was being done.

My first thought was that perhaps there was an innocent explanation for this decline: PACE was a massive trial, deemed ‘definitive’. The investigators were seen as the leading experts on this approach and the findings were said to be in line with other trials of the interventions. There wouldn’t seem much point in keeping on doing the same trial time after time.

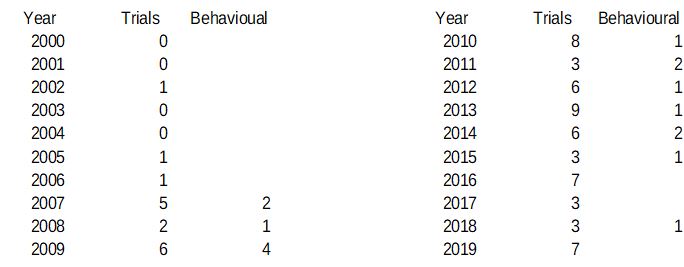

I decided, though, that I would also go to the website and check the numbers. And this is what I found:

The figures given in the article, then, are not false, but they would seem to be misleading. The overall numbers are very low, so a single year with more trials than usual will have a large effect. Kelland chose to compare the last five years with the previous five years. But if you compare the last ten years with the ten before them (and, after all, the first stories about harassment came out in 2011, so ten years would seem just as reasonable), then there has in fact been a massive increase in trials being launched, not the decline described in the article. Or one could be very mischievous and point out that since Professor Sharpe announced he was quitting the field last year, the number of trials has gone up.
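To make the point about comparison windows concrete, here is a minimal sketch in Python. The yearly counts are invented purely for illustration and are not the clinicaltrials.gov figures; they have only been chosen so that the two five-year totals match the 33 and 20 quoted in the article. The function name `trials_in_window` is also just an assumption for this example.

```python
from typing import Dict


def trials_in_window(counts: Dict[int, int], start: int, end: int) -> int:
    """Sum the trial starts for the years start..end inclusive."""
    return sum(n for year, n in counts.items() if start <= year <= end)


# HYPOTHETICAL yearly counts, invented for illustration only.
# They are NOT taken from clinicaltrials.gov; only the two five-year
# totals (33 and 20) correspond to figures quoted in the article.
hypothetical_counts = {
    2000: 1, 2001: 1, 2002: 2, 2003: 1, 2004: 2,
    2005: 2, 2006: 3, 2007: 3, 2008: 4, 2009: 5,
    2010: 5, 2011: 6, 2012: 9, 2013: 7, 2014: 6,
    2015: 4, 2016: 4, 2017: 3, 2018: 6, 2019: 3,
}

# Five-year comparison, as in the article.
previous_five = trials_in_window(hypothetical_counts, 2010, 2014)
last_five = trials_in_window(hypothetical_counts, 2015, 2019)

# Ten-year comparison over the same data.
previous_ten = trials_in_window(hypothetical_counts, 2000, 2009)
last_ten = trials_in_window(hypothetical_counts, 2010, 2019)

print("five-year windows:", previous_five, "->", last_five)
print("ten-year windows:", previous_ten, "->", last_ten)
```

With these invented numbers, the five-year comparison shows a fall while the ten-year comparison shows a rise, even though both are computed from exactly the same series. When the yearly counts are this small, the choice of window can decide the headline.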

There is a further point. The article says that he and others are being driven out of research because some patients do not like their approach as it ‘suggests their illness is psychological’. No one researching ME as a pathological illness has made such complaints. It would be expected then that trials taking this behavioural approach should show a decline and that others, looking at possible drug treatments, for example, would be unaffected. As the table shows, though, there is no such trend.*

Finally, the number of trials listed seemed very low to me. I looked at some of them. The listings include acupuncture studies from China but, as far as I could see, they do not include the PACE trial (or SMILE), so the numbers are probably not very reliable anyway.

An article standing up for the PACE researchers uses dodgy numbers.

As for the substance of Professor Sharpe’s accusations, he has himself described what he finds offensive: ‘attempts to damage his reputation and get his papers retracted’. Many would not agree that amounts to trolling.

It should also be pointed out that he himself has been described as ‘intemperate’; someone who ‘lashes out’ and who ‘cannot tell the difference between disagreement and defamation’.

Claims of bullying and harassment driving researchers from the field are often made by those with power against those without:

Added 17/03/2019:

*It has been suggested that there is in fact a discernible downward trend in the number of behavioural trials. But there is no way of knowing whether there is a trend, or whether there was a slight blip in 2009, or whether it is possible to know anything at all from such small numbers. It could be argued, for example, that one third of all clinical trials for ‘CFS/ME’ in 2018 were behavioural.

The same point about the reliability of the search applies, since neither PACE nor SMILE is listed.

And even if there were some sort of trend, there is no way of knowing the impact, for example, of the IOM report and the change in focus by the NIH.